The AI Sovereign Cloud Data Centre Power Models

Hyperscale operators, led by Amazon Web Services (AWS), Microsoft Azure, Google Cloud Platform, and Alibaba Cloud, represent 64% of the AI data centre market in 2024 (Precedence Research, 2025). These titans of digital infrastructure, along with NVIDIA and Oracle, have committed USD 450+ billion in near-term investments for sovereignty. If intelligence is becoming infrastructure, the real question is simple: Are we building it deliberately, or surrendering it by default?


How do we define AI sovereignty?

Sovereignty is a key concept, and it will be critical for any country that has to build its own AI infrastructure and large language models (LLMs).

In another scenario, sovereignty also means that countries will run someone else’s LLM on their own GPUs so that they can make sure their data doesn’t leave their country.

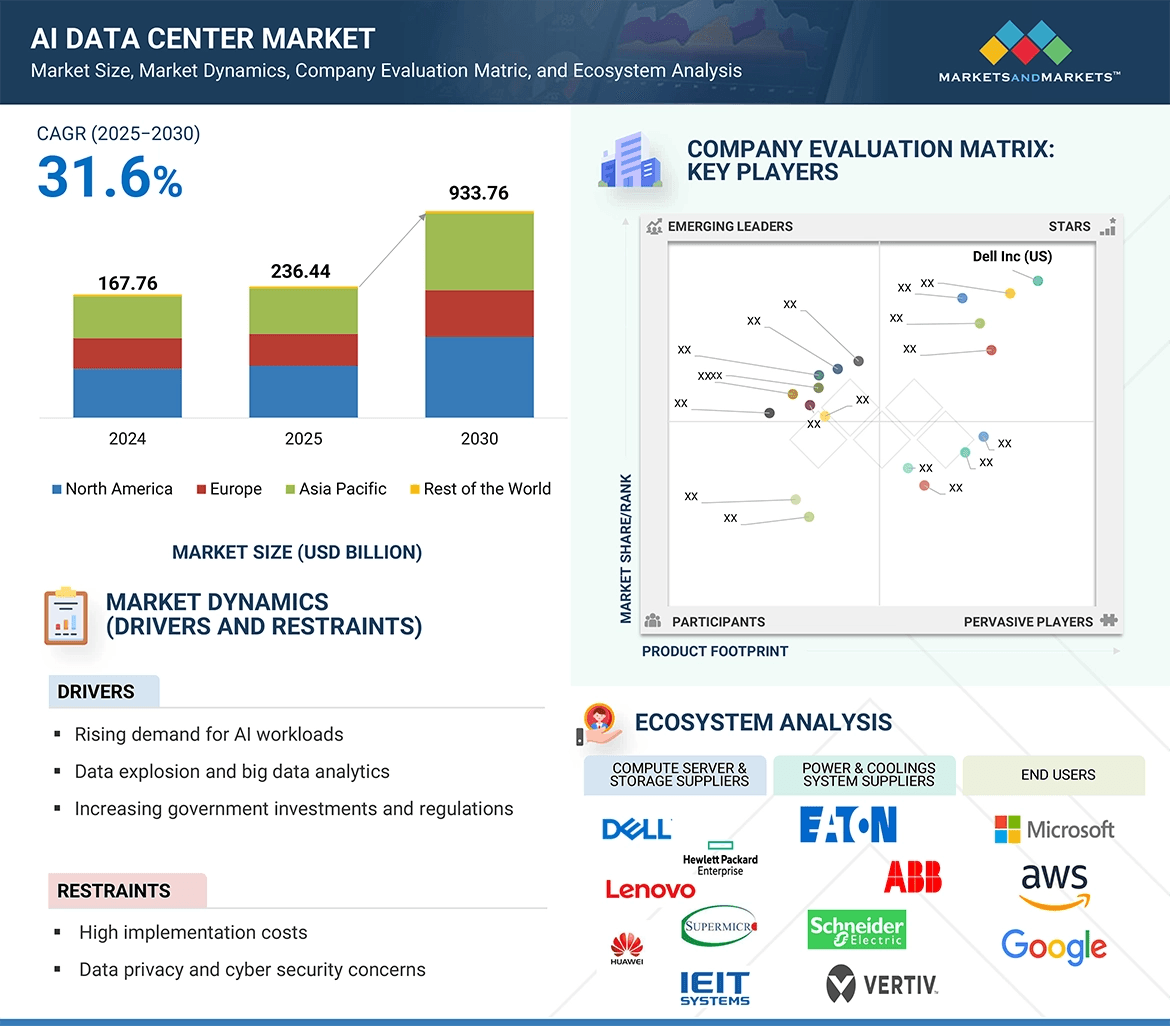

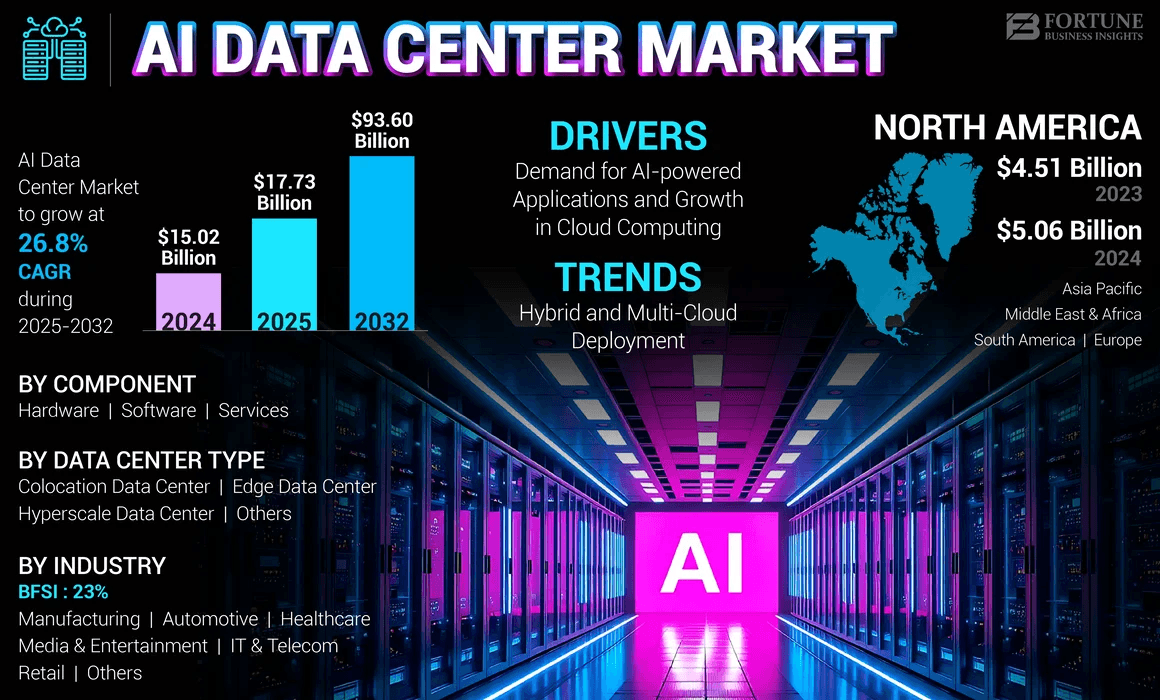

The global AI data centre market stands at the threshold of unprecedented transformation, poised to surge from USD 167.76 billion in 2024 to USD 933.76 billion by 2030, representing a compound annual growth rate (CAGR) of 31.6% (Markets and Markets, 2025). This exponential growth trajectory transcends mere economic opportunity—it embodies humanity’s collective leap toward a future where computational intelligence serves as the crucible for human potential.
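The growth rate implied by two endpoint figures can be sanity-checked with the standard compound annual growth rate formula. The sketch below is illustrative only: the function names `cagr` and `project` are assumptions, and treating 2024 to 2030 as six compounding periods is also an assumption. On those terms the quoted endpoints imply roughly 33% a year, while compounding the 2024 figure at 31.6% gives around USD 870 billion, which suggests the cited rate may be measured from a different base year.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate between two values over `years` periods."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


def project(start_value: float, rate: float, years: int) -> float:
    """Value after compounding `start_value` at `rate` for `years` periods."""
    return start_value * (1 + rate) ** years


# Figures quoted in the text (USD billions); the six-year span is an assumption.
implied_rate = cagr(167.76, 933.76, years=6)
value_at_quoted_rate = project(167.76, 0.316, years=6)

print(f"Implied CAGR 2024-2030: {implied_rate:.1%}")
print(f"2030 value at 31.6% from the 2024 base: USD {value_at_quoted_rate:.0f} billion")
```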

Each data centre, each rack of processors, each photon of light travelling through fibre-optic cables represents not merely infrastructure, but the externalisation of human consciousness itself, the physical manifestation of our species’ insatiable drive to understand, to create, to transcend.

Some Key Facts:

- Global data centre market projected to reach USD 165-934 billion by 2030-2034

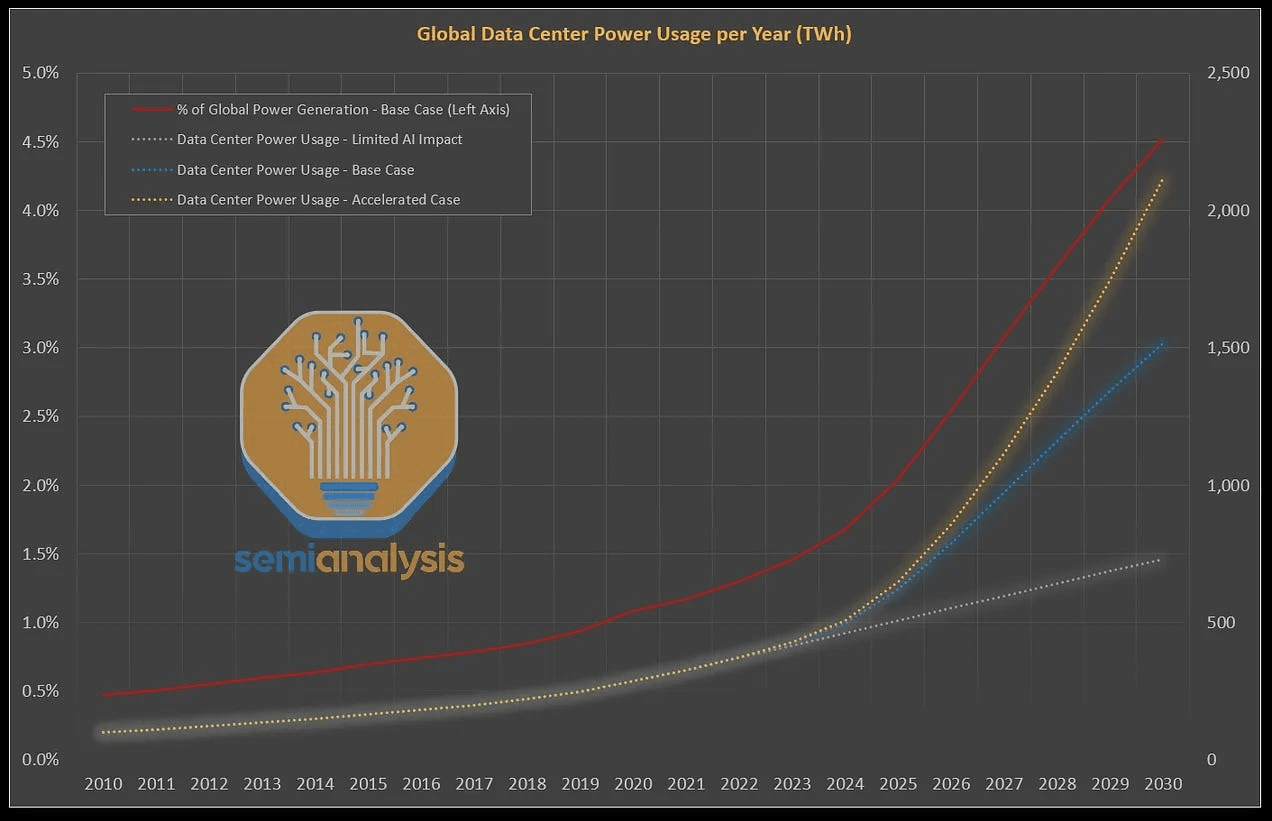

- Energy consumption expected to rise from 415 TWh (2024) to 1,200 TWh (2035)

- Strategic national-scale investment commitments of up to USD 500 billion (Stargate Project)

- Liquid cooling technology achieving 2-4 year ROI with 25-35% energy efficiency gains

The Dawn of Cognitive Infrastructure

We stand at a singular moment in human history, the 5th Industrial Revolution, where the boundary between human cognition and machine computation dissolves into collaborative synthesis.

The AI data centre is not merely a building filled with servers; it is the cathedral of our age, the temple where humanity’s accumulated wisdom is distilled, processed, and amplified through silicon and light.

The thesis that animates this analysis is profound in its simplicity yet revolutionary in its implications: each human being embodies humanity itself. Therefore, the infrastructure we build to augment human intelligence must be conceived not as external machinery, but as an extension of our collective nervous system.

When we invest in AI data centres, we invest in the very capacity of the human species to think, to dream, to solve the existential challenges that confront us.

AI Data Center Statistics and Trends | Image credit: The Network Installers

Global Market Dynamics

The most conservative estimates place the 2024 market at USD 13-18 billion, whilst comprehensive assessments including broader infrastructure components estimate USD 167-236 billion.

By 2030-2034, projections converge on a range of USD 93-934 billion, dependent upon the inclusion of ancillary services, networking infrastructure, and peripheral systems (Fortune Business Insights, 2024; Markets and Markets, 2025; Precedence Research, 2025).

North America maintains market dominance with 33-40% market share, driven by concentrated hyperscale deployments in Virginia, Texas, Oregon, and California. The United States hosts 51% of the world’s hyperscale AI data centres, and in Virginia, home to “Data Centre Alley”, data centres accounted for about 26% of the state’s total electricity consumption in 2023 (Pew Research Center, 2025).

Asia Pacific emerges as the fastest-growing region with projected CAGR of 23-28%, fuelled by China’s USD 100 billion “New Infrastructure” initiative, Singapore’s smart nation strategy, and India’s digital transformation programmes (Precedence Research, 2025). Europe, catalysed by France’s €109 billion AI investment and the EU AI Act’s regulatory framework, represents a strategic growth corridor balancing innovation with sustainability mandates.

This geographical distribution is not merely economic—it represents the geopolitical architecture of the 21st century.

Data sovereignty, computational supremacy, and AI capability now constitute national security imperatives alongside traditional military and economic metrics.

End-User Segmentation

The market segmentation reveals the breadth of AI’s transformative impact:

By End-User Industry (2024 Market Share):

- Technology & Cloud Providers: 48%

- Banking, Financial Services & Insurance (BFSI): 20-24%

- Healthcare & Life Sciences: Fastest growing segment (32.93% CAGR)

- Manufacturing & Industry 4.0: Expanding rapidly

- Media & Entertainment: Driven by generative AI content creation

- Automotive: Autonomous vehicle training and simulation

A Small History of Data Centers

Data centers, in large part, have followed the rise of computers and the rise of the internet. I’ll briefly discuss the history of these trends and how we got here.

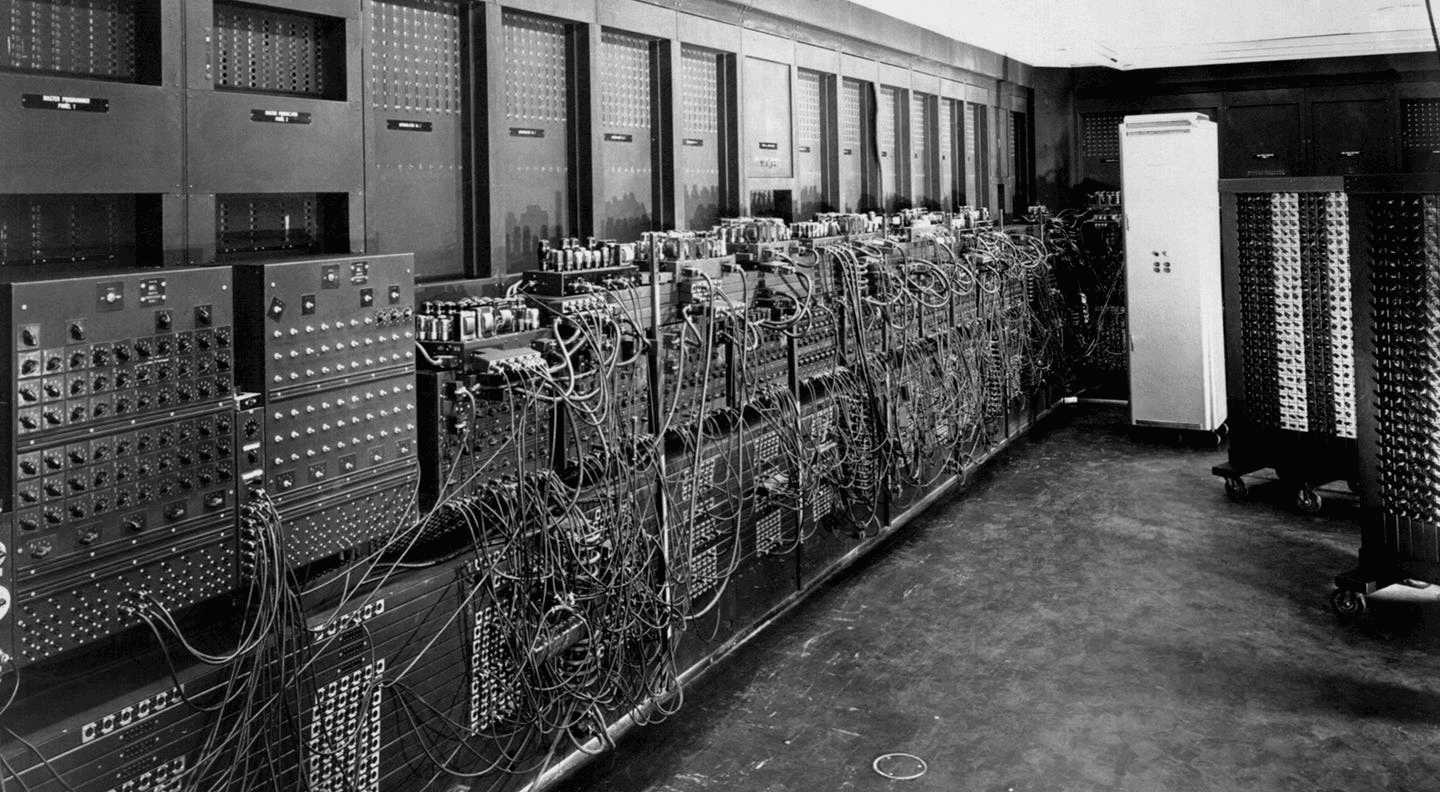

The Early History of Data Centers

The earliest versions of computing look similar to data centers today: a centralized computer targeted at solving a compute-intensive and critical task.

We have two early examples of this:

- The Colossus – The computer designed by engineer Tommy Flowers and used at Bletchley Park to break the German Lorenz cipher. (Note: Alan Turing, who worked at Bletchley Park on breaking the Enigma machine, is widely considered the father of Artificial Intelligence and Computer Science. He proposed the Turing Test as a way of judging whether a machine can show human-like intelligence, a test that some argue recent chatbots such as ChatGPT have passed.)

- The ENIAC – The computer designed during WW2 for the US Army but not publicly unveiled until 1946. The Colossus was built before the ENIAC, but the ENIAC is often considered the first computer because the Colossus was kept secret for decades.

Both were housed in what could be considered the “first data centers.”

In the 1950s, IBM rose to dominate computing with the mainframe computer. This would lead to their multi-decade dominance in technology, with AT&T being another of the dominant technology companies of the time.

The ARPANET, launched in 1969, was developed as a way to connect the growing number of computers in the US. It’s now considered the earliest version of the internet. Given that it was a government project, its densest connections were around Washington, DC.

This was the root of Northern Virginia’s computing dominance. As each new generation of data centers was built, they wanted to use the existing infrastructure in place. That happened to be in the Northern Virginia region and still is today!

Data Center Markets | Image credit: TFE Times

The Rise of the Internet & the Cloud

In the 1990s, as the internet grew, we needed more physical infrastructure to process the increasing amount of Internet data. In part, this came in the form of data centers as interconnection points. Telecom providers like AT&T had already built out communications infrastructure, so it was a natural expansion for them.

However, these telecom companies had a similar co-opetition dynamic to vertically integrated cloud providers today. AT&T owned the data being passed through their infrastructure and the infrastructure itself. So, in the event of limited capacity, AT&T would prioritize its own data. Companies were wary of this dynamic, which led to the rise of data center companies like Digital Realty and Equinix.

Data centers saw massive investment throughout the dot-com bubble, but that slowed significantly once the bubble burst (a lesson we should keep in mind when extrapolating data).

Data centers saw their slump start to reverse in 2006 with the release of Amazon Web Services. Since then, data center capacity has mostly steadily grown in the US.

US data center demand is forecast to grow by some 10 percent a year until 2030 | Image credit: network-king.net

Enter AI Data Centers

That steady growth continued until 2023, when the AI frenzy grabbed hold of us. Estimates now show data center capacity doubling by 2030 (keep in mind, these are estimates).

The unique workloads of AI training led to a renewed focus on data center scale. The closer computing infrastructure is together, the more performant it can be. Additionally, when data centers are designed as units of compute and not just rooms of servers, companies can get additional integration benefits.

Finally, since training doesn’t need to be close to end users, data centers can be built anywhere.

Summarizing AI data centers today: they’re focused on size, performance, and cost, and they can be built anywhere.

Demand for AI data centers to more than double over the next 10 years

Power demand from US data centers is projected to hit 106 gigawatts by 2035, according to a new report from BloombergNEF, a 36% increase from its previous estimate. Data centers use roughly 40 gigawatts today. This is just the beginning, as we become more addicted to AI.

The AI industry’s massive growth has driven up demand for data centers that provide the computing power needed to run the software. The growing power demand from data centers is likely to create an “inflection point for US grids,” BloombergNEF said.

Big Tech continues to shell out seemingly unlimited CapEx on AI, and a sizable chunk of that spending is going toward the data centers to scale it and meet growing demand. Microsoft spent $11.1 billion on data center leases last quarter, accounting for 31% of its overall spending.

Interestingly, BloombergNEF’s data center demand estimate may be somewhat conservative. Deloitte estimates data center demand would hit 176 GW by 2035, and Goldman Sachs projects data center demand to reach 92 GW by 2027 — a far higher growth rate than in BloombergNEF’s projection.
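To make the comparison of growth rates concrete, the sketch below converts each projection into a constant yearly growth rate. It rests on loud assumptions: the base year of 2025 is not stated in the text, all three forecasts are measured against the same 40 GW baseline, and all three are treated as covering the same scope, which may not be the case. The function name `implied_annual_growth` is hypothetical.

```python
def implied_annual_growth(base_gw: float, target_gw: float, years: int) -> float:
    """Constant yearly growth rate that takes base_gw to target_gw in `years` years."""
    return (target_gw / base_gw) ** (1 / years) - 1


BASE_GW = 40.0     # "roughly 40 gigawatts today" from the text
BASE_YEAR = 2025   # assumption: the text does not state the base year

# (target in GW, target year) as quoted in the text
projections = {
    "BloombergNEF": (106.0, 2035),
    "Deloitte": (176.0, 2035),
    "Goldman Sachs": (92.0, 2027),
}

growth = {
    name: implied_annual_growth(BASE_GW, target, year - BASE_YEAR)
    for name, (target, year) in projections.items()
}

for name, rate in growth.items():
    print(f"{name}: {rate:.1%} per year")
```

Under these assumptions the Goldman Sachs figure implies by far the steepest yearly growth, because it reaches a similar level in two years rather than ten.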

Growing demand is shifting the geography of data centers. As northern Virginia — historically the dominant region for data centers — becomes saturated, new projects are cropping up in southern and central Virginia, and data center projects in Georgia are moving further from Atlanta, the report notes. In Texas, former bitcoin mining sites are being repurposed for AI.

Energy grid capacity qualms aside, this report looks bullish for AI. The AI industry needs to grow rapidly to justify the accelerating capex and frothy valuations of AI companies. If data center demand truly does grow as quickly as BloombergNEF estimates, perhaps AI can keep the bubble from bursting.

But if smaller models become more prevalent and more efficient models like the new ones from DeepSeek disrupt the industry, it could undermine the need for data centers and result in a glut of capacity — something that seems unimaginable in the current environment.

Hyperscale Cloud Services (IaaS, PaaS, SaaS)

The $600 billion compute asset investment frontier is the new layer of the world’s physical economy

With the rise of artificial intelligence, cloud computing, and hyperscale operations, capital is increasingly flowing into data centers, GPUs, and the broader compute stack. Compute has become a strategic asset class, and investors are paying attention.

The Scale of the Opportunity

The global data center market generated over $340 billion in 2024 and is projected to exceed $450 billion by 2025. Forecasts show it reaching up to $624 billion by the end of the decade, with annual growth rates of 8–11%.

Some estimates suggest that total global investment into AI-driven compute infrastructure may reach $750 billion by 2026. This includes hyperscale centers, high-performance GPU clusters, and cloud capacity buildouts.

- Global data center market size expected to reach $624 billion by 2029

- AI infrastructure project pipeline approaching $750 billion

- Compute is becoming a long-term capital target for both institutional and sovereign investors

Investor Momentum

The world’s largest tech companies are scaling their infrastructure investments at an unprecedented rate. Microsoft is expected to spend over $80 billion in 2025 on data centers and AI compute. Alphabet, Amazon, and Meta together are allocating more than $130 billion in AI and cloud infrastructure in the same period.

Private capital is following closely. Private equity firms, hedge funds, and family offices are entering the space, leasing compute capacity, acquiring data center real estate, and backing new GPU providers.

Hyperscale operators

Hyperscale operators—led by Amazon Web Services (AWS), Microsoft Azure, Google Cloud Platform, and Alibaba Cloud—represent 64% of the AI data centre market in 2024 (Precedence Research, 2025). These titans of digital infrastructure have committed USD 450+ billion in near-term investments:

- Microsoft: USD 80 billion in fiscal year 2025, with over half allocated to US facilities

- Amazon AWS: USD 86-100 billion for AI-as-a-Service infrastructure

- Google/Alphabet: USD 75 billion globally, including USD 25 billion in the PJM Interconnection region

- Meta: USD 60-65 billion for AI-optimised facilities in Louisiana and Texas

The hyperscale model operates on economies of massive scale, achieving Power Usage Effectiveness (PUE) ratios as low as 1.09 (Google’s 2025 fleet average), compared to 1.4-1.8 for traditional enterprise data centres (Socomec, 2025). This efficiency advantage stems from:

- Custom-designed silicon (Google TPUs, AWS Graviton, Microsoft Maia)

- AI-optimised cooling systems achieving 30% energy reduction

- Advanced workload orchestration and dynamic resource allocation

- Vertical integration of hardware, software, and services
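PUE is the ratio of total facility energy to the energy delivered to IT equipment, so anything above 1.0 is overhead for cooling, power conversion, and other non-IT loads. The sketch below shows what the quoted PUE values mean in practice, using power as a stand-in for energy. The 100 MW IT load and the function names are hypothetical, chosen only for illustration.

```python
def total_facility_power_mw(it_load_mw: float, pue: float) -> float:
    """Total facility power, given PUE = total facility power / IT power."""
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    return it_load_mw * pue


def overhead_power_mw(it_load_mw: float, pue: float) -> float:
    """Power spent on cooling, power conversion and other non-IT loads."""
    return total_facility_power_mw(it_load_mw, pue) - it_load_mw


IT_LOAD_MW = 100.0  # hypothetical IT load, chosen only for illustration

for label, pue in [("hyperscale", 1.09), ("enterprise, low", 1.4), ("enterprise, high", 1.8)]:
    overhead = overhead_power_mw(IT_LOAD_MW, pue)
    print(f"{label}: PUE {pue} -> {overhead:.0f} MW overhead on {IT_LOAD_MW:.0f} MW of IT load")
```

At the same IT load, a facility at PUE 1.8 spends 80 MW on overhead where a facility at PUE 1.09 spends 9 MW.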

Revenue Model: Pay-as-you-go compute, storage, and AI service charges, typically billed by:

- GPU/TPU hours (USD 2-8 per GPU-hour depending on chip generation)

- Data storage (USD 0.02-0.10 per GB-month)

- Network egress (USD 0.08-0.12 per GB)

- AI inference tokens (USD 0.0001-0.01 per thousand tokens)
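As a rough illustration of how these unit prices add up, the sketch below estimates a monthly bill range from the low and high ends of each quoted rate. The workload (8 GPUs for 720 hours, 10 TB stored, 2 TB of egress, 500 million tokens) and the names `Usage` and `monthly_bill_range` are hypothetical. Real provider pricing has tiers and commitments that this ignores.

```python
from dataclasses import dataclass


@dataclass
class Usage:
    gpu_hours: float
    storage_gb_months: float
    egress_gb: float
    thousand_tokens: float


# (low, high) USD unit prices taken from the ranges quoted above
RATES = {
    "gpu_hours": (2.0, 8.0),
    "storage_gb_months": (0.02, 0.10),
    "egress_gb": (0.08, 0.12),
    "thousand_tokens": (0.0001, 0.01),
}


def monthly_bill_range(usage: Usage) -> tuple[float, float]:
    """Return the (low, high) monthly bill in USD for the given usage."""
    low = sum(getattr(usage, key) * rate[0] for key, rate in RATES.items())
    high = sum(getattr(usage, key) * rate[1] for key, rate in RATES.items())
    return low, high


# Hypothetical workload: 8 GPUs for 720 hours, 10 TB stored, 2 TB egress, 500M tokens
usage = Usage(
    gpu_hours=8 * 720,
    storage_gb_months=10_000,
    egress_gb=2_000,
    thousand_tokens=500_000,
)
low, high = monthly_bill_range(usage)
print(f"Estimated monthly bill: USD {low:,.0f} to USD {high:,.0f}")
```

In this example GPU hours dominate the bill at both ends of the range, which is consistent with compute being the main billing driver.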

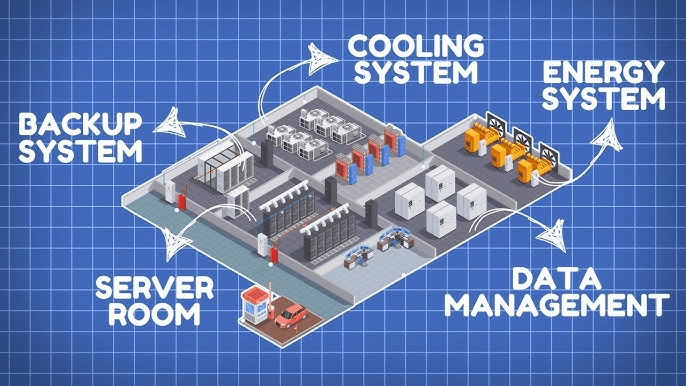

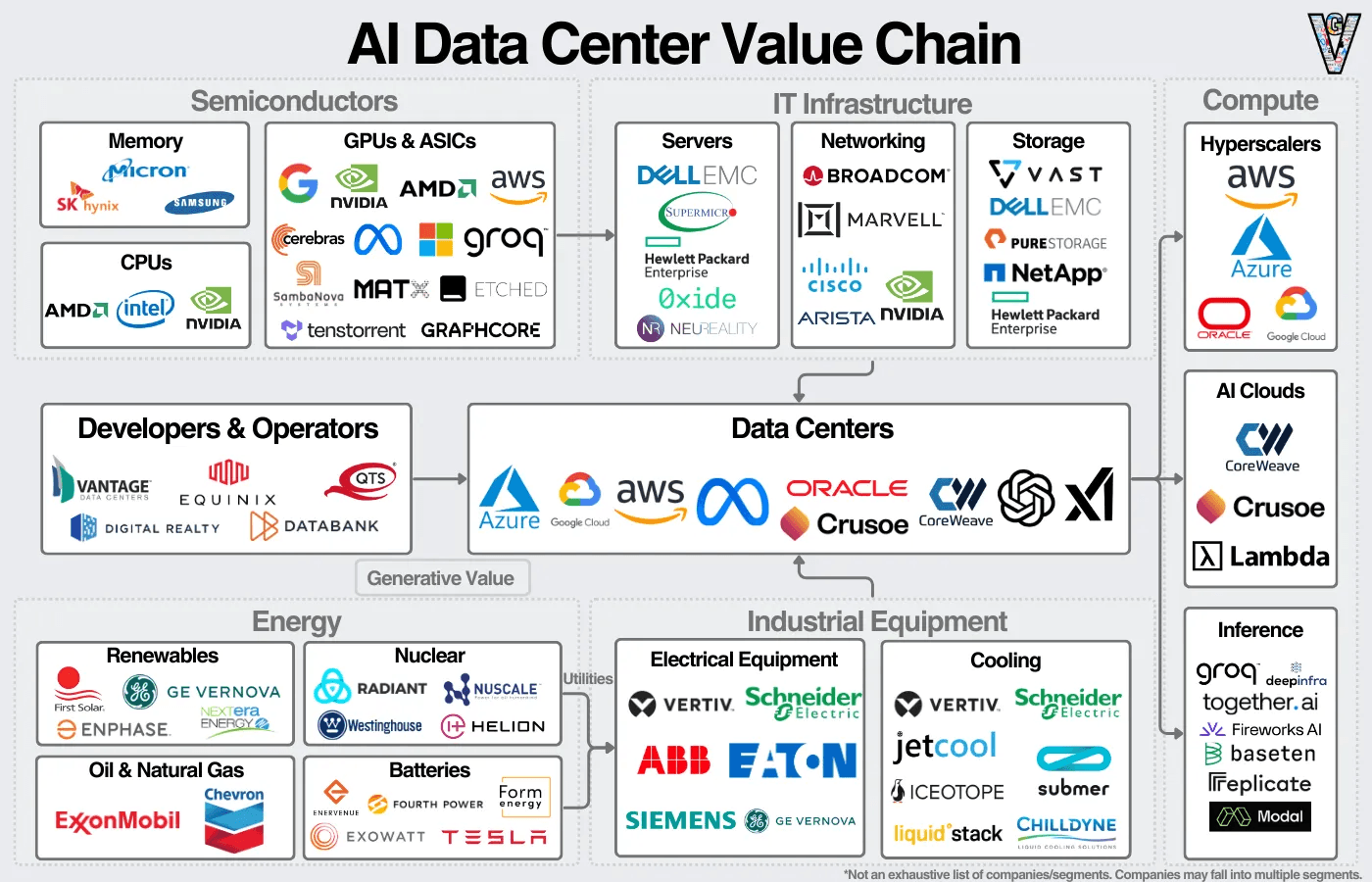

Building an AI data center

A compute provider (hyperscaler, AI company, GPU cloud) will either build the data center themselves or will work with a data center developer like Vantage, QTS, or Equinix to find a plot of land with energy capacity.

They’ll then hire a general contractor to manage the construction process. The general contractor will hire subcontractors for each function (electrical, plumbing, HVAC) and purchase raw materials. Laborers will then move to the area for as long as the project is running. After “the shell” of the building is put up, the next step is installing equipment.

Image credit: Generative Value

3.2 Energy and Colocation Services: The Infrastructure Foundation

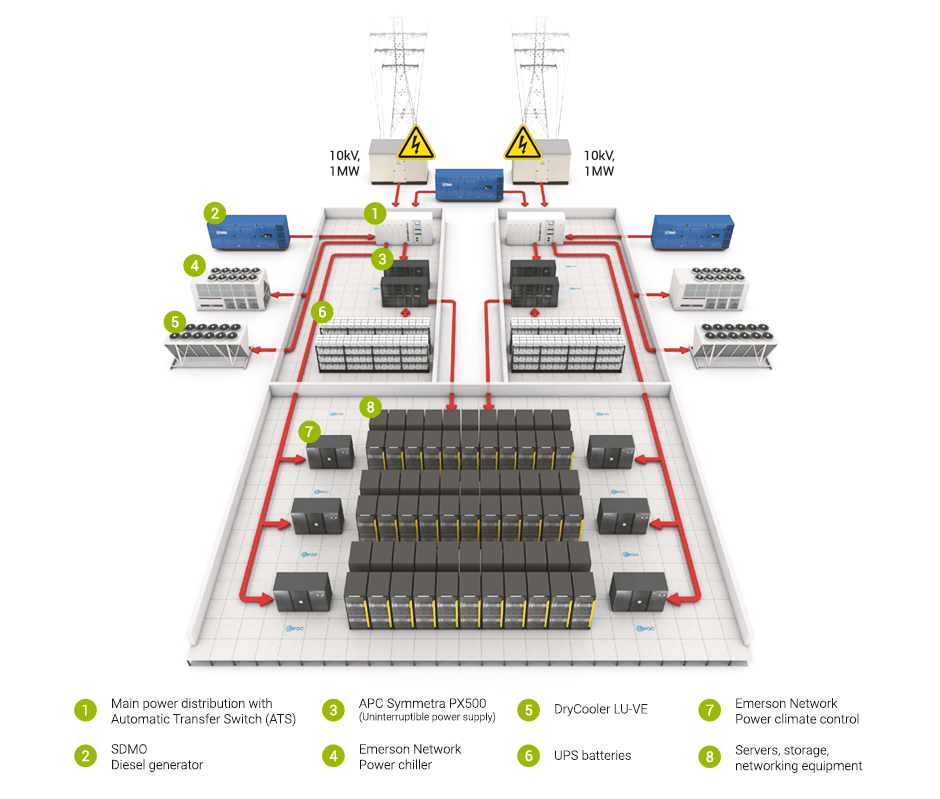

Powering an AI Data Center

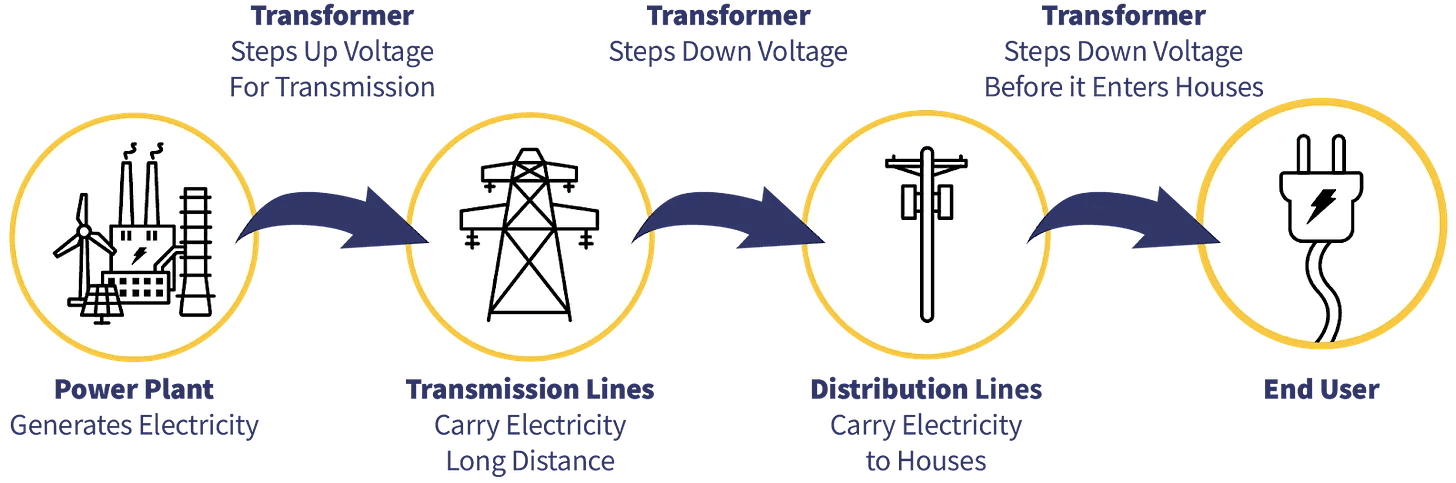

The energy supply chain can broadly be broken down into:

- Sources – Fossil fuels, renewables, and nuclear that can generate power.

- Generation – Power plants turn fossil fuels into electricity; for renewables, this happens much closer to the source.

- Transmission – Electricity is then transmitted via high-voltage lines close to the destination. Transformers and substations will take high-voltage energy and knock it down to be manageable for consumption.

- Utilities/Distribution – Utilities will manage the last mile distribution and manage the delivery of power through power purchase agreements (PPAs).

Transmission and distribution together are what’s commonly referred to as “the electric grid”, and both are managed locally. Depending on the location, either one can be a bottleneck to energy delivery.

Energy is proving to be a key bottleneck in the AI data center buildout.

Colocation facilities provide the physical infrastructure—power, cooling, security, connectivity—whilst enterprise customers install and operate their own hardware. Market leaders including Equinix, Digital Realty, and CyrusOne achieve average revenue of USD 800-1,200 per kilowatt annually, with premium AI-ready suites commanding USD 1,500-2,000/kW (Investbase, 2024).

The colocation model appeals to enterprises requiring:

- Direct hardware control for security or compliance

- Hybrid cloud architectures bridging on-premises and cloud

- Geographic distribution for latency optimisation

- Capital preservation whilst accessing hyperscale infrastructure

Revenue Structure:

- Monthly recurring revenue (MRR) from space, power, and cooling

- Cross-connects and network connectivity fees

- Managed services and remote hands support

- Value-added services (security, compliance, backup)

Colocation facilities demonstrate strong financial characteristics:

- 70-95% occupancy rates within 1-3 years of operation

- 95%+ customer retention rates

- Long-term contracts (typically 3-10 years) providing revenue visibility

- Levered IRR of 9-12% over 10-year investment horizons
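Combining the per-kilowatt revenue figures and occupancy rates quoted in this section gives a simple view of facility-level revenue. The sketch below is a minimal illustration, not a financial model: the 10 MW facility size and the function name `annual_colo_revenue_usd` are hypothetical, and it ignores cross-connect fees, managed services, and contract escalators.

```python
def annual_colo_revenue_usd(capacity_kw: float, occupancy: float, revenue_per_kw: float) -> float:
    """Annual recurring revenue from leased power capacity."""
    if not 0.0 <= occupancy <= 1.0:
        raise ValueError("occupancy must be between 0 and 1")
    return capacity_kw * occupancy * revenue_per_kw


CAPACITY_KW = 10_000  # hypothetical 10 MW facility

# Occupancy and per-kW revenue values taken from the ranges quoted in the text
ramp_up = annual_colo_revenue_usd(CAPACITY_KW, occupancy=0.70, revenue_per_kw=800)
stabilised = annual_colo_revenue_usd(CAPACITY_KW, occupancy=0.95, revenue_per_kw=1_200)
ai_ready = annual_colo_revenue_usd(CAPACITY_KW, occupancy=0.95, revenue_per_kw=2_000)

print(f"Ramp-up (70%, USD 800/kW):      USD {ramp_up:,.0f}")
print(f"Stabilised (95%, USD 1,200/kW): USD {stabilised:,.0f}")
print(f"AI-ready (95%, USD 2,000/kW):   USD {ai_ready:,.0f}")
```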

3.3 GPU-as-a-Service (GPUaaS): Democratising AI Compute

The emergence of GPUaaS providers—including Lambda Labs, CoreWeave, and Together AI—addresses the critical bottleneck of GPU availability. NVIDIA’s dominance in AI accelerators (estimated 80%+ market share for training workloads) has created supply constraints, with H100 and H200 GPUs commanding premium pricing and extended lead times.

GPUaaS operators deploy capital-intensive infrastructure (USD 3.9 million average cost per AI rack in 2025) and lease access on flexible terms:

- On-demand: USD 2-4 per GPU-hour for H100, scaling to USD 8-10 for GB200

- Reserved capacity: 20-40% discounts for committed usage

- Spot instances: 50-70% discounts for interruptible workloads
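The trade-off between these pricing tiers can be sketched as follows. The on-demand rate of USD 3 per GPU-hour and the 30% and 60% discounts are midpoints of the ranges quoted above. The 1,000 GPU-hour job size and the 20% rework overhead for interrupted spot workloads are purely hypothetical assumptions, as are the function names.

```python
def effective_rate(on_demand_rate: float, discount: float) -> float:
    """Hourly rate after applying a fractional discount (0.3 means 30% off)."""
    if not 0.0 <= discount < 1.0:
        raise ValueError("discount must be in [0, 1)")
    return on_demand_rate * (1 - discount)


def job_cost(gpu_hours: float, rate: float, rework_fraction: float = 0.0) -> float:
    """Cost of a job; rework_fraction models extra hours lost to interruptions."""
    return gpu_hours * (1 + rework_fraction) * rate


ON_DEMAND_H100 = 3.0  # assumption: midpoint of the USD 2-4 range quoted above
GPU_HOURS = 1_000     # hypothetical job size

on_demand = job_cost(GPU_HOURS, ON_DEMAND_H100)
reserved = job_cost(GPU_HOURS, effective_rate(ON_DEMAND_H100, 0.30))
# Assumption: interruptions force 20% of the work to be redone on spot capacity
spot = job_cost(GPU_HOURS, effective_rate(ON_DEMAND_H100, 0.60), rework_fraction=0.20)

print(f"On-demand: USD {on_demand:,.0f}")
print(f"Reserved:  USD {reserved:,.0f}")
print(f"Spot:      USD {spot:,.0f}")
```

In this model spot capacity stays cheaper than on-demand as long as (1 + rework fraction) × (1 − discount) is below 1, which is why it suits interruptible workloads that checkpoint well.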

This model proves particularly attractive for:

- AI startups lacking capital for infrastructure investment

- Research institutions requiring burst capacity

- Enterprises experimenting with AI before committing to dedicated infrastructure

3.4 Integrated Solutions and Systems Integrators

Companies including Dell Technologies, Hewlett Packard Enterprise (HPE), and Supermicro offer turnkey AI data centre solutions, managing the complete value chain from design through operation. This model appeals to organisations seeking:

- Single-vendor accountability and integrated support

- Pre-validated reference architectures

- Faster time-to-deployment than DIY approaches

- Ongoing optimisation and lifecycle management

Revenue derives from:

- Hardware sales (30-40% gross margins)

- Professional services (20-30% gross margins)

- Managed services contracts (recurring revenue)

- Software licensing and platform fees

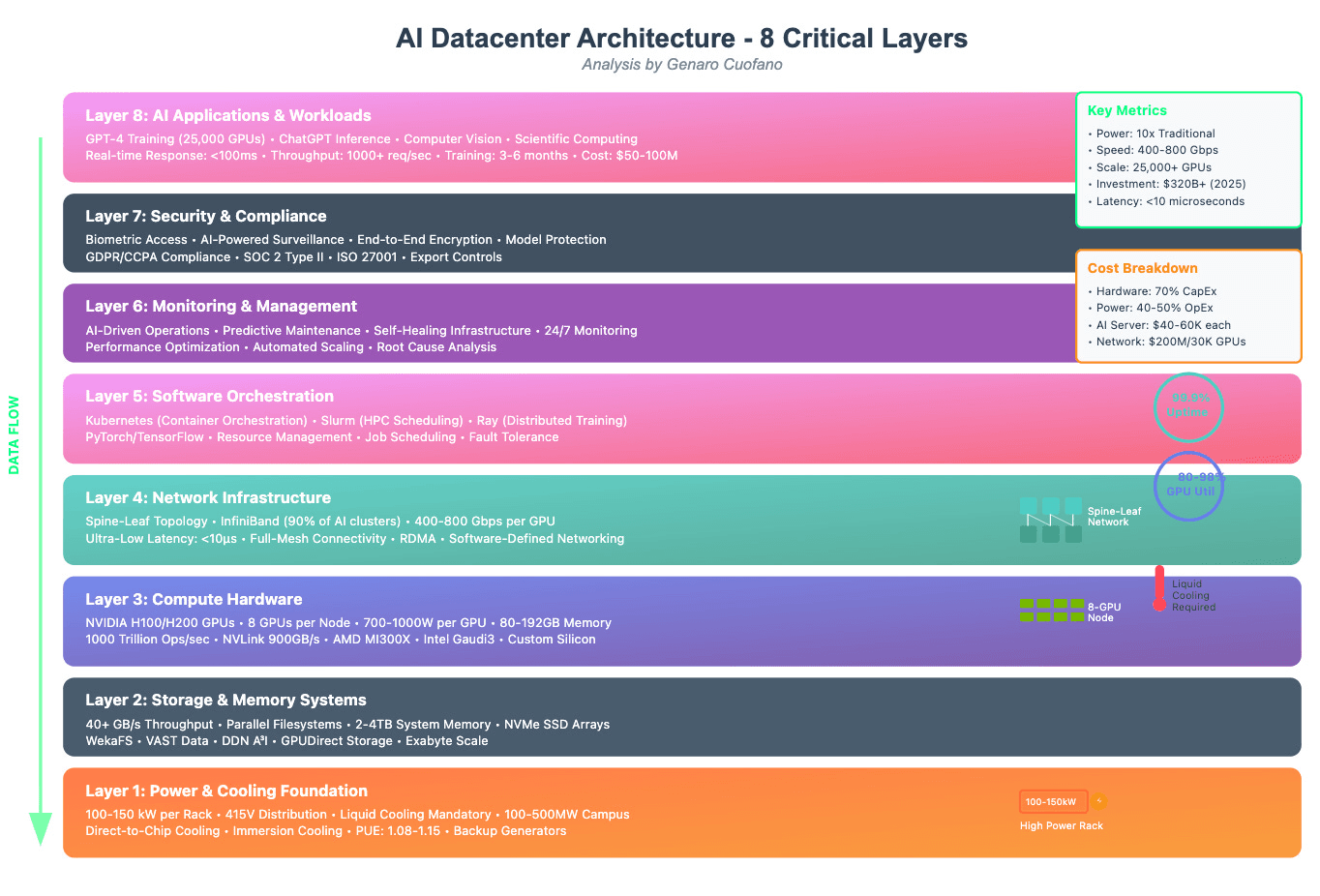

AI Architecture and Infrastructure: The Foundations of the Digital-Physical Cathedral of Our Age

We stand at civilisation’s threshold, constructing the infrastructure that will define humanity’s trajectory for centuries to come. The AI data centre is the cathedral of our age—not built to glorify abstract deities, but to honour the infinite potential of human consciousness itself.

The financial models presented in this analysis—the CAGR projections, the IRR calculations, the NPV estimates—are necessary but insufficient. They capture economic value but fail to measure human value: the diseases diagnosed and cured through AI-accelerated drug discovery, the scientific breakthroughs achieved through computational modelling impossible in human timescales, the creative works generated through human-AI collaboration pushing artistic boundaries.

For government partners: Your role transcends economic development—you are architecting national cognitive capability, ensuring your citizens possess the computational infrastructure to compete and thrive in an AI-transformed world. Your investment in AI data centres is investment in human potential itself.

For private capital partners: Your returns should measure not merely financial profit but contribution to human flourishing. The most successful ventures will balance commercial viability with mission-driven purpose, creating sustainable business models that serve both shareholder returns and stakeholder wellbeing.

For humanity collectively: The AI data centres we build today are the scaffolding upon which future generations will construct civilisational achievements we can barely imagine. We are planting computational forests whose fruits our grandchildren will harvest.

The thesis that animated this analysis bears repeating in conclusion: Each human being embodies humanity itself. When we invest in AI infrastructure, we invest in the collective computational capacity of our species. The servers humming in these data centres are not separate from us—they are extensions of human cognition, amplifications of human intelligence, manifestations of human will.

The financial opportunity is extraordinary: a market growing from USD 167 billion to nearly USD 1 trillion within six years. But human opportunity transcends economics—it is nothing less than the conscious evolution of our species, the deliberate enhancement of human cognitive capacity through silicon partners.

Let us build wisely. Let us build sustainably. Let us build with the recognition that these cathedrals of computation serve not artificial intelligence, but human intelligence—not machines, but the humans who dream through machines.

The 5th Industrial Revolution beckons. The infrastructure decisions we make today will echo through centuries. May we prove worthy of this extraordinary responsibility and extraordinary opportunity.